Dr Pip Arnold, Karekare Education, shares her thoughts

Aotearoa New Zealand is full of amazing teachers who work every day with young people to make a difference in their lives. For the last 14 years I have been on a journey to unpack and make sense of various aspects of the statistical investigations strand in order to support teachers’ content knowledge so that young people in Aotearoa New Zealand have the best statistical grounding in the world.

Since the implementation of NCEA in 2001, posing questions has been part of the requirement for students who are showing evidence for statistical enquiry. The “question” should be checked by the teacher to make sure it will provide an opportunity for students to show what they know about undertaking a statistical investigation.

In 2008, while working with a teacher on what statistical content knowledge teachers needed to support the implementation of the then-new curriculum, I stumbled across my first problem with the problem: what makes a good statistical question?

In trying to answer this question, a problematic situation (Simon, 1995) arose: What makes a good investigative question? Just naming the question (investigative) clarified a major confusion around question purpose. You see, people were (and still are) confusing investigative questions with survey/data collection questions and seeing both as statistical questions. Actually, they are both statistical questions… but one is the question we ask of the data and the other is the question we ask to collect the data.

See my doctoral thesis, Statistical Investigative Questions (Arnold, 2013), for more detail on that journey.

In the thesis I focused on summary and comparison investigative questions. That is, what would the “problem” be for situations where the investigation focused on one variable (summary situation) or on a variable compared across groups (comparison situation)? Key ideas are summarised below:

Criteria for what makes a good investigative question (Arnold, 2013; Arnold & Franklin, 2021)

- The variable(s) of interest is/are clear and available

- The population of interest is clear

If it is not a sample/population situation, then it would be that the group[1] of interest is clear

- The intent is clear, e.g., summary/descriptive, comparison, relationship, time series.

- The answer to the question(s) is possible using the data.

- The question(s) is one that is worth investigating, that it is interesting, and that there is a purpose.

- The question(s) allows for analysis of the whole group(s)[2].

The intent, variable and group/population of interest should be clear in the investigative question. The question should also be answerable with data that is available or can be collected, allow for analysis of the whole group, and be worthwhile investigating.

Defining the population

If you have been involved with NCEA level 1 and the current achievement standard 91035, Investigate a given multivariate data set using the statistical enquiry cycle, you know that defining the population is a problem. Yes, the second problem with the problem: how do we define the population that our investigative question is about?

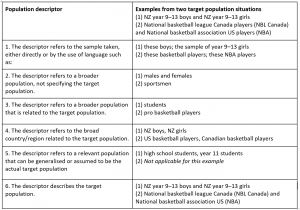

In my research working with many year 10 students I found that they had multiple ways of trying to define the population for the same dataset. In fact, in the 2008 post-test for my doctoral research, 22 of the students in the class had posed at least one comparison question about “being taller”. Across the 22 students there were 14 different populations or groups defined. This prompted a focus on how the different population descriptors differed and how this difference might help to inform progress towards the actual target population that needed to be defined. As a result of three research cycles the following progression of ideas about population descriptors was developed (Arnold, 2013).

Population descriptors (Arnold & Franklin, 2021, p. 6)

The progression of population descriptors is meant to help teachers with moving their students towards the descriptor that describes the target population. If teachers can identify the population descriptor their student is using, e.g., the broad country/region related to the target population, then they know that the next step is to move the student towards being more specific within that. For example, moving from NZ boys and NZ girls to NZ year 11 boys and NZ year 11 girls.

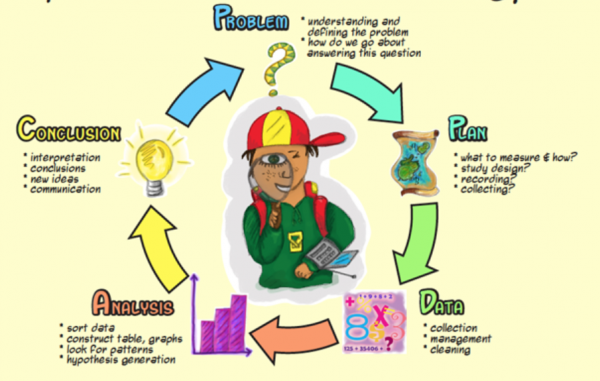

Over the last two years I have been researching and writing a book on Statistical Investigations[3]. The aim of the book is to support teachers around statistical content knowledge for years 1-11, curriculum levels 1-6. The book is structured specifically to support the PPDAC cycle (Wild & Pfannkuch, 1999). In looking at what makes a good investigative question for the book, I struck my third problem with the problem: do the criteria developed for what makes a good investigative question for summary and comparison situations hold up when applied to relationship and time series situations?

The short answer is yes, they do, and while there is more information on this in the book, in a nutshell:

- For relationship situations – the only thing to note is that we are only looking for the group of interest (we don’t do sample/population inference for relationship situations within these curriculum levels).

- For time series situations – how does defining the group of interest apply? Here we would be describing the “who” and the time period. For example, What is the pattern of the monthly number of two-litre ice-cream packs sold in New Zealand from 2014-2020? The variable is the monthly number of two-litre ice-cream packs sold in New Zealand; the “group” of interest is two-litre ice-cream packs in New Zealand from 2014 to 2020.

And now we have a review of achievement standards (again), and it seems that the problem is with the problem. The investigative question has been a real sticking point for students achieving 91035, both because of the requirement for the investigative question to be “right” and because of a pedantic approach to awarding the statistics achievement standards that has arisen due to many factors; no holistic judgement allowed here.

The information I have read about the new MS1.1 achievement standard suggests that the investigative question will not be required for assessment, but a purpose statement will be part of the evidence.

While I mourn the potential loss of requiring an investigative question within assessment evidence, giving a purpose statement, or more generally a purpose, amounts to the same thing. The six criteria for what makes a good investigative question also hold for what makes a good purpose for a statistical investigation.

The purpose drives the nature of the statistical analysis that is done.

- The purpose identifies the variable(s) of interest.

- The purpose identifies the “who” we will collect/source data about (the group or population).

- The purpose identifies the intent (summary, comparison, relationship, time series situation).

- The purpose must be able to be realised with data that can be collected or sourced.

- The purpose should be about the whole of the who (the group).

- The purpose should be meaningful and interesting.

The intent, variable and group/population of interest should be clear in the purpose. The purpose should also be able to be realised with data that is available or can be collected, allow for analysis of the whole group, and be worthwhile investigating.

The problem with the problem is that we have let it become a critical object in the evidence for 91035. The problem isn’t with the investigative question or with the purpose; the problem is with the way that judgements are made about evidence.

I strongly support the movement towards a more holistic approach to the judgement of evidence for assessment for qualification purposes.

I worry that in our rush to fix the assessment issue we lose important stuff, important stuff that teachers are already doing well with students in the teaching and learning of statistics.

Footnotes

[1] Population for summary and comparison situations (from curriculum level 5); group for relationship situations; group for time series situations (for all curriculum levels 3-6)

[2] Group refers to the whole available dataset for analysis. This means the question should not be about an individual (person, time, object), e.g., Who is the tallest? What is the hottest month? Neither of these is an investigative question because, amongst other things, they do not consider the whole group; they are analysis questions.

[3] Beeby Award – due for publication in 2022. Working closely with Associate Professor Maxine Pfannkuch and Associate Professor Tony Trinick.

References

Arnold, P. (2013). Statistical Investigative Questions – An Enquiry into Posing and Answering Investigative Questions from Existing Data (Doctoral thesis). Retrieved from https://researchspace.auckland.ac.nz/handle/2292/21305

Arnold, P., & Franklin, C. (2021). What Makes a Good Statistical Question? Journal of Statistics and Data Science Education, 1–11.

Simon, M. A. (1995). Reconstructing mathematics pedagogy from a constructivist perspective. Journal for Research in Mathematics Education, 26(2), 114–145.

Wild, C. J., & Pfannkuch, M. (1999). Statistical thinking in empirical enquiry. International Statistical Review, 67(3), 223–265. doi: 10.1111/j.1751-5823.1999.tb00442.x

Image credit: Census at School